Blog: July 2009 Archives

Snake Oil Salesman

In cryptography, we’ve long used the term “snake oil” to refer to crypto systems with good marketing hype and little actual security. It’s the phrase I generalized into “security theater.”

Well, it turns out that there really is a snake oil salesman.

Eve Ensler on Security

Interesting TED talk by Eve Ensler on security. She doesn’t use any of the terms, but in the beginning she’s echoing a lot of the current thinking about evolutionary psychology and how it relates to security.

Nuclear Self-Terrorization

More fearmongering. The headline is “Terrorists could use internet to launch nuclear attack: report.” The subhead: “The risk of cyber-terrorism escalating to a nuclear strike is growing daily, according to a study.” In the article:

The claims come in a study commissioned by the International Commission on Nuclear Non-proliferation and Disarmament (ICNND), which suggests that under the right circumstances, terrorists could break into computer systems and launch an attack on a nuclear state triggering a catastrophic chain of events that would have a global impact.

Without better protection of computer and information systems, the paper suggests, governments around the world are leaving open the possibility that a well-coordinated cyberwar could quickly elevate to nuclear levels.

In fact, says the study, “this may be an easier alternative for terrorist groups than building or acquiring a nuclear weapon or dirty bomb themselves”.

Though the paper admits that the media and entertainment industries often confuse and exaggerate the risk of cyberterrorism, it also outlines a number of potential threats and situations in which dedicated hackers could use information warfare techniques to make a nuclear attack more likely.

Note the weasel words: the study “suggests that under the right circumstances.” We’re “leaving open the possibility.” The report “outlines a number of potential threats and situations” where the bad guys could “make a nuclear attack more likely.”

Gadzooks. I’m tired of this idiocy. Stop overreacting to rare risks. Refuse to be terrorized, people.

Another New AES Attack

A new and very impressive attack against AES has just been announced.

Over the past couple of months, there have been two (the second blogged about here) new cryptanalysis papers on AES. The attacks presented in the papers are not practical—they’re far too complex, they’re related-key attacks, and they’re against larger-key versions and not the 128-bit version that most implementations use—but they are impressive pieces of work all the same.

This new attack, by Alex Biryukov, Orr Dunkelman, Nathan Keller, Dmitry Khovratovich, and Adi Shamir, is much more devastating. It is a completely practical attack against ten-round AES-256:

Abstract.

AES is the best known and most widely used block cipher. Its three versions (AES-128, AES-192, and AES-256) differ in their key sizes (128 bits, 192 bits and 256 bits) and in their number of rounds (10, 12, and 14, respectively). In the case of AES-128, there is no known attack which is faster than the 2^128 complexity of exhaustive search. However, AES-192 and AES-256 were recently shown to be breakable by attacks which require 2^176 and 2^119 time, respectively. While these complexities are much faster than exhaustive search, they are completely non-practical, and do not seem to pose any real threat to the security of AES-based systems.

In this paper we describe several attacks which can break with practical complexity variants of AES-256 whose number of rounds are comparable to that of AES-128. One of our attacks uses only two related keys and 2^39 time to recover the complete 256-bit key of a 9-round version of AES-256 (the best previous attack on this variant required 4 related keys and 2^120 time). Another attack can break a 10 round version of AES-256 in 2^45 time, but it uses a stronger type of related subkey attack (the best previous attack on this variant required 64 related keys and 2^172 time).

They also describe an attack against 11-round AES-256 that requires 2^70 time, which is almost practical.
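To get a feel for why some of these complexities count as practical and others do not, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, a single machine that performs one billion of the paper's "time" units per second; that rate and the names in the code are hypothetical, while the exponents are the ones quoted in the abstract and in the sentence above.

```python
# Rough wall-clock estimates for the attack complexities quoted above.
# ASSUMED_OPS_PER_SECOND is an illustrative assumption, not a figure from the paper.
ASSUMED_OPS_PER_SECOND = 1e9
SECONDS_PER_YEAR = 365.25 * 24 * 3600

# log2 of the time complexity, as quoted in the text.
ATTACK_LOG2_TIME = {
    "9-round AES-256 (new attack)": 39,
    "10-round AES-256 (new attack)": 45,
    "11-round AES-256 (new attack)": 70,
    "full AES-256 (earlier related-key attack)": 119,
    "AES-128 exhaustive search": 128,
}


def seconds_needed(log2_time, ops_per_second=ASSUMED_OPS_PER_SECOND):
    """Seconds to perform 2**log2_time operations at the assumed rate."""
    return 2 ** log2_time / ops_per_second


def describe(seconds):
    """Render a duration in minutes, hours, or years."""
    if seconds < 3600:
        return f"{seconds / 60:.1f} minutes"
    if seconds < SECONDS_PER_YEAR:
        return f"{seconds / 3600:.1f} hours"
    return f"{seconds / SECONDS_PER_YEAR:.3g} years"


for name, exponent in ATTACK_LOG2_TIME.items():
    print(f"{name}: 2^{exponent} -> {describe(seconds_needed(exponent))}")
```

Under that assumed rate, the 9-round and 10-round attacks finish in minutes to hours, the 11-round attack would take tens of thousands of years on one such machine, and the attacks on the full cipher stay far out of reach.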

These new results greatly improve on the Biryukov, Khovratovich, and Nikolic papers mentioned above, and a paper I wrote with six others in 2000, where we describe a related-key attack against 9-round AES-256 (then called Rijndael) in 2^224 time. (This again proves the cryptographer’s adage: attacks always get better, they never get worse.)

By any definition of the term, this is a huge result.

There are three reasons not to panic:

- The attack exploits the fact that the key schedule for the 256-bit version is pretty lousy (something we pointed out in our 2000 paper), but it doesn’t extend to AES with a 128-bit key.

- It’s a related-key attack, which requires the cryptanalyst to have access to plaintexts encrypted with multiple keys that are related in a specific way.

- The attack only breaks 11 rounds of AES-256. Full AES-256 has 14 rounds.

Not much comfort there, I agree. But it’s what we have.

Cryptography is all about safety margins. If you can break n rounds of a cipher, you design it with 2n or 3n rounds. What we’re learning is that the safety margin of AES is much less than previously believed. And while there is no reason to scrap AES in favor of another algorithm, NIST should increase the number of rounds of all three AES variants. At this point, I suggest AES-128 at 16 rounds, AES-192 at 20 rounds, and AES-256 at 28 rounds. Or maybe even more; we don’t want to be revising the standard again and again.
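The safety-margin arithmetic for AES-256 can be sketched in a few lines. The round counts come from the text (14 rounds in the full cipher, 11 rounds broken, 28 rounds suggested); the function and constant names are made up for this illustration.

```python
# Safety margin = total rounds / rounds known to be breakable.
# Round counts are the ones stated in the text for AES-256.
FULL_ROUNDS_AES256 = 14
BROKEN_ROUNDS_AES256 = 11      # best attack described in this post
SUGGESTED_ROUNDS_AES256 = 28   # the round count suggested in this post


def safety_margin(total_rounds, broken_rounds):
    """Ratio of rounds in the cipher to rounds an attack can break."""
    return total_rounds / broken_rounds


def rule_of_thumb_range(broken_rounds):
    """Round counts a designer would pick under the 2n-to-3n rule."""
    return 2 * broken_rounds, 3 * broken_rounds


current = safety_margin(FULL_ROUNDS_AES256, BROKEN_ROUNDS_AES256)
suggested = safety_margin(SUGGESTED_ROUNDS_AES256, BROKEN_ROUNDS_AES256)
low, high = rule_of_thumb_range(BROKEN_ROUNDS_AES256)

print(f"current margin:   {current:.2f}x")
print(f"suggested margin: {suggested:.2f}x")
print(f"2n-to-3n rule with n = {BROKEN_ROUNDS_AES256}: {low} to {high} rounds")
```

With 11 rounds broken, the 2n-to-3n rule calls for 22 to 33 rounds, so the current 14 rounds falls well short and the suggested 28 sits inside the range.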

And for new applications I suggest that people don’t use AES-256. AES-128 provides more than enough security margin for the foreseeable future. But if you’re already using AES-256, there’s no reason to change.

The paper I have is still a draft. It is being circulated among cryptographers, and should be online in a couple of days. I will post the link as soon as I have it.

UPDATED TO ADD (8/3): The paper is public.

Risks of Cloud Computing

Excellent essay by Jonathan Zittrain on the risks of cloud computing:

The cloud, however, comes with real dangers.

Some are in plain view. If you entrust your data to others, they can let you down or outright betray you. For example, if your favorite music is rented or authorized from an online subscription service rather than freely in your custody as a compact disc or an MP3 file on your hard drive, you can lose your music if you fall behind on your payments—or if the vendor goes bankrupt or loses interest in the service. Last week Amazon apparently conveyed a publisher’s change-of-heart to owners of its Kindle e-book reader: some purchasers of Orwell’s “1984” found it removed from their devices, with nothing to show for their purchase other than a refund. (Orwell would be amused.)

Worse, data stored online has less privacy protection both in practice and under the law. A hacker recently guessed the password to the personal e-mail account of a Twitter employee, and was thus able to extract the employee’s Google password. That in turn compromised a trove of Twitter’s corporate documents stored too conveniently in the cloud. Before, the bad guys usually needed to get their hands on people’s computers to see their secrets; in today’s cloud all you need is a password.

Thanks in part to the Patriot Act, the federal government has been able to demand some details of your online activities from service providers—and not to tell you about it. There have been thousands of such requests lodged since the law was passed, and the F.B.I.’s own audits have shown that there can be plenty of overreach—perhaps wholly inadvertent—in requests like these.

Here’s me on cloud computing.

iPhone Encryption Useless

Interesting, although I want some more technical details.

…the new iPhone 3GS’ encryption feature is “broken” when it comes to protecting sensitive information such as credit card numbers and social-security digits, Zdziarski said.

Zdziarski said it’s just as easy to access a user’s private information on an iPhone 3GS as it was on the previous generation iPhone 3G or first generation iPhone, both of which didn’t feature encryption. If a thief got his hands on an iPhone, a little bit of free software is all that’s needed to tap into all of the user’s content. Live data can be extracted in as little as two minutes, and an entire raw disk image can be made in about 45 minutes, Zdziarski said.

Wondering where the encryption comes into play? It doesn’t. Strangely, once one begins extracting data from an iPhone 3GS, the iPhone begins to decrypt the data on its own, he said.

New Real Estate Scam

Nigerian scammers find homes listed for sale on these public search sites, copy the pictures and listings verbatim, and then post the information onto Craigslist under available housing rentals, without the consent or knowledge of Craigslist, who has been notified.

After the posting is listed, unsuspecting individuals contact the poster, who is Nigerian, for more information on the “rental.” The Nigerian scammer will state that they had to leave the country very quickly to do missionary or contract work in Africa and were unable to rent their house before leaving, therefore they have to take care of this remotely. The “homeowner” sends the prospective renter an application and tells them to send them first and last month’s rent to the Nigerian scammer via Western Union. The prospective renter is further told that if they “qualify,” they will send them the keys for their house. Once the money is wired to the scammer, they show up at the house, see the home is actually for sale, are unable to access the property, and their money is gone.

Large Signs a Security Risk

A large sign saying “United States” at a border crossing was deemed a security risk:

Yet three weeks ago, less than a month after the station opened, workers began prying the big yellow letters off the building’s facade on orders from Customs and Border Protection. The plan is to dismantle the rest of the sign this week.

“At the end of the day, I think they were somewhat surprised at how bold and how bright it was,” said Les Shepherd, the chief architect of the General Services Administration, referring to the customs agency’s sudden turnaround.

“There were security concerns,” said Kelly Ivahnenko, a spokeswoman for the customs agency. “The sign could be a huge target and attract undue attention. Anything that would place our officers at risk we need to avoid.”

The move is a depressing, if not wholly unpredictable, example of how the lingering trauma of 9/11 can make it difficult for government bureaucracies to make rational decisions. It reflects a tendency to focus on worst-case scenarios to the exclusion of common sense, as well as a fundamental misreading of the sign and the message it conveys. And if it is carried out as planned, it will gut a design whose playful pop aesthetic is an inspired expression of what America is about.

Exactly.

Swiss Security Problem: Storing Gold

Seems like the Swiss may be running out of secure gold storage. If this is true, it’s a real security issue. You can’t just store the stuff behind normal locks. Building secure gold storage takes time and money.

I am reminded of a related problem the EU had during the transition to the euro: where to store all the bills and coins before the switchover date. There wasn’t enough vault space in banks, because the vast majority of currency is in circulation. It’s a similar problem, although the EU banks could solve theirs with lots of guards, because it was only a temporary problem.

Tips for Staying Safe Online

This is funny:

Tips for Staying Safe Online

All citizens can follow a few simple guidelines to keep themselves safe in cyberspace. In doing so, they not only protect their personal information but also contribute to the security of cyberspace.

- Install anti-virus software, a firewall, and anti-spyware software to your computer, and update as necessary.

- Create strong passwords on your electronic devices and change them often. Never record your password or provide it to someone else.

- Back up important files.

- Ignore suspicious e-mail and never click on links asking for personal information.

- Only open attachments if you’re expecting them and know what they contain.

- If shelter is not available, lie flat in a ditch or other low-lying area. Do not get under an overpass or bridge. You are safer in a low, flat location.

- Additional tips are available at www.staysafeonline.org.

Those must be some pretty nasty attachments.

Here’s the current version of the page, with the misplaced bullet point removed. And here’s where it was copied and pasted from.

Base Rate Fallacy

Nice description of the base rate fallacy.
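For readers who want the arithmetic, here is a minimal sketch of the fallacy using Bayes' theorem. The detector accuracy and the base rate are hypothetical numbers chosen only to make the effect visible; they do not come from the linked description.

```python
def posterior_probability(base_rate, true_positive_rate, false_positive_rate):
    """P(condition is real | alarm fired), by Bayes' theorem."""
    true_alarms = true_positive_rate * base_rate
    false_alarms = false_positive_rate * (1.0 - base_rate)
    return true_alarms / (true_alarms + false_alarms)


# Hypothetical detector: catches 99% of real threats, raises a false alarm
# on 1% of innocents, and real threats are one in a million.
p = posterior_probability(
    base_rate=1e-6,
    true_positive_rate=0.99,
    false_positive_rate=0.01,
)
print(f"P(real threat | alarm) = {p:.6f}")
```

Even with a detector that sounds 99 percent accurate, almost every alarm is a false one when the thing being detected is rare. Ignoring that low prior is the base rate fallacy.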

Friday Squid Blogging: Humboldt Squid Invasion

Thousands of jumbo flying squid, aggressive 5-foot-long sea monsters with razor-sharp beaks and toothy tentacles, have invaded the shallow waters off San Diego, spooking scuba divers and washing up dead on beaches.

They’re aggressive:

One diver described how one of the rust-coloured creatures ripped the buoyancy aid and light from her chest, and grabbed her with its tentacles.

…a powerful, outsize squid that features eight snakelike arms lined with suckers full of nasty little teeth, a razor-sharp beak that can rapidly rip flesh into bite-size chunks, and an unrelenting hunger. It’s called the Humboldt, or jumbo, squid, and it’s not the sort of calamari you’re used to forking off your dinner plate. This squid grows to seven feet or more and perhaps a couple hundred pounds. It has a rep as the outlaw biker of the marine world: intelligent and opportunistic, a stone-cold cannibal willing to attack divers with a seemingly deliberate hostility.

[…]

Humboldts—mostly five-footers—swarmed around him. As Cassell tells it, one attacked his camera, which smashed into his face, while another wrapped itself around his head and yanked hard on his right arm, dislocating his shoulder. A third bit into his chest, and as he tried to protect himself he was gang-dragged so quickly from 30 to 70 feet that he didn’t have time to equalize properly, and his right eardrum ruptured. “I was in the water five minutes and I already had my first injury,” Cassell recalls, shaking his head. “It was like being in a barroom brawl.” Somehow he managed to push the squid-pile off and make his way to the surface, battered and exhilarated. “I was in love with the animal,” he says.

That article is a really fun read.

This isn’t the first time they’ve invaded the waters of Southern California, and they’ve been spotted as far north as Seattle.

Info on cooking them.

SHA-3 Second Round Candidates Announced

NIST has announced the 14 SHA-3 candidates that have advanced to the second round: BLAKE, Blue Midnight Wish, CubeHash, ECHO, Fugue, Grøstl, Hamsi, JH, Keccak, Luffa, Shabal, SHAvite-3, SIMD, and Skein.

In February, I chose my favorites: Arirang, BLAKE, Blue Midnight Wish, ECHO, Grøstl, Keccak, LANE, Shabal, and Skein. Of the ones NIST eventually chose, I am most surprised to see CubeHash and most surprised not to see LANE.

Here’s my 2008 essay on SHA-3. Here’s NIST’s SHA-3 page. And here’s the page on my own submission, Skein.

Social Security Numbers are Not Random

Social Security Numbers are not random. In some cases, you can predict them with date and place of birth.

Information about an individual’s place and date of birth can be exploited to predict his or her Social Security number (SSN). Using only publicly available information, we observed a correlation between individuals’ SSNs and their birth data and found that for younger cohorts the correlation allows statistical inference of private SSNs. The inferences are made possible by the public availability of the Social Security Administration’s Death Master File and the widespread accessibility of personal information from multiple sources, such as data brokers or profiles on social networking sites. Our results highlight the unexpected privacy consequences of the complex interactions among multiple data sources in modern information economies and quantify privacy risks associated with information revelation in public forums.

I don’t see any new insecurities here. We already know that Social Security Numbers are not secrets. And anyone who wants to steal a million SSNs is much more likely to break into one of the gazillion databases out there that store them.

The Twitter Attack

Excellent article detailing the Twitter attack.

Mapping Drug Use by Testing Sewer Water

I wrote about this in 2007, but there’s new research:

Scientists from Oregon State University, the University of Washington and McGill University partnered with city workers in 96 communities, including Pendleton, Hermiston and Umatilla, to gather samples on one day, March 4, 2008. The scientists then tested the samples for evidence of methamphetamine, cocaine and ecstasy, or MDMA.

Addiction specialists were not surprised by the researchers’ central discovery, that every one of the 96 cities—representing 65 percent of Oregon’s population—had a quantifiable level of methamphetamine in its wastewater.

“This validates what we suspected about methamphetamine use in Oregon,” said Geralyn Brennan, addiction prevention epidemiologist for the Department of Human Services.

Drug researchers previously determined the extent of illicit drug use through mortality records and random surveys, which are not considered entirely reliable. Survey respondents may not accurately recall how much or how often they use illicit drugs and they may not be willing to tell the truth. Surveys also gathered information about large regions of the state, not individual cities.

The data gathered from municipal wastewater, however, are concrete and reveal a detailed snapshot of drug use for that day. Researchers placed cities into ranks based on a drug’s “index load” – average milligrams per person per day.

These techniques can detect drug usage at individual houses. It’s just a matter of where you take your samples.
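The article gives only the unit of the “index load” (average milligrams per person per day), not the formula. A plausible way to compute such a per-capita figure, offered here as an assumption rather than the researchers' method, is concentration times daily flow divided by population served. The town names and numbers below are hypothetical.

```python
def index_load_mg_per_person_per_day(concentration_mg_per_liter,
                                     flow_liters_per_day,
                                     population_served):
    """Assumed formula: total drug mass per day divided by population."""
    if population_served <= 0:
        raise ValueError("population_served must be positive")
    total_mg_per_day = concentration_mg_per_liter * flow_liters_per_day
    return total_mg_per_day / population_served


# Hypothetical sampling points: (mg per liter, liters per day, people served).
samples = {
    "Town A": (0.0005, 20_000_000, 50_000),
    "Town B": (0.0002, 4_000_000, 8_000),
}

loads = {
    name: index_load_mg_per_person_per_day(*values)
    for name, values in samples.items()
}

# Rank sampling points from highest to lowest load, as the article describes.
ranking = sorted(loads, key=loads.get, reverse=True)
for name in ranking:
    print(f"{name}: {loads[name]:.3f} mg per person per day")
```

The same calculation works at any sampling point. Move the sampler from the treatment plant to a single building's sewer connection and the “population served” shrinks accordingly.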

Verifiable Dismantling of Nuclear Bombs

Cryptography has zero-knowledge proofs, where Alice can prove to Bob that she knows something without revealing it to Bob. Here’s something similar from the real world. It’s a research project to allow weapons inspectors from one nation to verify the disarming of another nation’s nuclear weapons without learning any weapons secrets in the process, such as the amount of nuclear material in the weapon.
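For readers unfamiliar with the cryptographic side of the analogy, here is a toy sketch of one classic protocol of this kind, Schnorr identification, in which Alice convinces Bob she knows a secret exponent without sending it. This is not the method used in the weapons-verification project. The group parameters are deliberately tiny and insecure, the function names are made up for illustration, and the zero-knowledge property of this protocol holds against a verifier who chooses challenges honestly.

```python
import secrets

# Toy group (far too small for real use): g = 2 has prime order q = 11 mod p = 23.
P, Q, G = 23, 11, 2


def keygen():
    """Alice's secret x and public value y = g^x mod p."""
    x = secrets.randbelow(Q - 1) + 1
    return x, pow(G, x, P)


def commit():
    """Alice picks a random nonce r and sends t = g^r mod p."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)


def challenge():
    """Bob replies with a random challenge c."""
    return secrets.randbelow(Q)


def respond(r, c, x):
    """Alice answers with s = r + c*x mod q, which hides x behind r."""
    return (r + c * x) % Q


def verify(y, t, c, s):
    """Bob checks g^s == t * y^c mod p using only public values."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P


x, y = keygen()
r, t = commit()
c = challenge()
s = respond(r, c, x)
print("Bob accepts:", verify(y, t, c, s))
```

Bob sees only y, t, c, and s. Because r is fresh and random each time, s on its own tells him nothing about x, yet the check succeeds only if Alice's answer is consistent with the secret behind y.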

Cybercrime Paper

“Distributed Security: A New Model of Law Enforcement,” Susan W. Brenner and Leo L. Clarke.

Abstract:

Cybercrime, which is rapidly increasing in frequency and in severity, requires us to rethink how we should enforce our criminal laws. The current model of reactive, police-based enforcement, with its origins in real-world urbanization, does not and cannot protect society from criminals using computer technology. This article proposes a new model of distributed security that can supplement the traditional model and allow us to deal effectively with cybercrime. The new model employs criminal sanctions, primarily fines, to induce computer users and those who provide access to cyberspace to employ reasonable security measures as deterrents. We argue that criminal sanctions are preferable in this context to civil liability, and we suggest a system of administrative regulation backed by criminal sanctions that will provide the incentives necessary to create a workable deterrent to cybercrime.

It’s from 2005, but I’ve never seen it before.

Friday Squid Blogging: Bottled Water Plus Squid

Only in Japan:

Bandai toy company from Japan has finally realized that bottles of water just aren’t cute. As Japan is the cute capital of the world, this just wouldn’t do. To fix the problem, they developed these adorable floating squids that can be added to any bottle of water. Thank god for Japanese innovation. Of course, they’re only available in Japan, but at least they’re affordable at only $6 each.

Pepper Spray–Equipped ATMs

South Africa takes its security seriously. Here’s an ATM that automatically squirts pepper spray into the face of “people tampering with the card slots.”

Sounds cool, but these kinds of things are all about false positives:

But the mechanism backfired in one incident last week when pepper spray was inadvertently inhaled by three technicians who required treatment from paramedics.

Patrick Wadula, spokesman for the Absa bank, which is piloting the scheme, told the Mail & Guardian Online: “During a routine maintenance check at an Absa ATM in Fish Hoek, the pepper spray device was accidentally activated.

“At the time there were no customers using the ATM. However, the spray spread into the shopping centre where the ATMs are situated.”

Privacy Salience and Social Networking Sites

Reassuring people about privacy makes them more, not less, concerned. It’s called “privacy salience,” and Leslie John, Alessandro Acquisti, and George Loewenstein (all at Carnegie Mellon University) demonstrated this in a series of clever experiments. In one, subjects completed an online survey consisting of a series of questions about their academic behavior, such as “Have you ever cheated on an exam?” Half of the subjects were first required to sign a consent warning, designed to make privacy concerns more salient, while the other half were not. Also, subjects were randomly assigned to receive either a privacy confidentiality assurance, or no such assurance. When the privacy concern was made salient (through the consent warning), people reacted negatively to the subsequent confidentiality assurance and were less likely to reveal personal information.

In another experiment, subjects completed an online survey where they were asked a series of personal questions, such as “Have you ever tried cocaine?” Half of the subjects completed a frivolous-looking survey titled “How BAD are U??” with a picture of a cute devil. The other half completed the same survey with the title “Carnegie Mellon University Survey of Ethical Standards,” complete with a university seal and official privacy assurances. The results showed that people who were reminded about privacy were less likely to reveal personal information than those who were not.

Privacy salience does a lot to explain social networking sites and their attitudes towards privacy. From a business perspective, social networking sites don’t want their members to exercise their privacy rights very much. They want members to be comfortable disclosing a lot of data about themselves.

Joseph Bonneau and Soeren Preibusch of Cambridge University have been studying privacy on 45 popular social networking sites around the world. (You may not have realized that there are 45 popular social networking sites around the world.) They found that privacy settings were often confusing and hard to access; Facebook, with its 61 privacy settings, is the worst. To understand some of the settings, they had to create accounts with different settings so they could compare the results. Privacy tends to increase with the age and popularity of a site. General-use sites tend to have more privacy features than niche sites.

But their most interesting finding was that sites consistently hide any mentions of privacy. Their splash pages talk about connecting with friends, meeting new people, sharing pictures: the benefits of disclosing personal data.

These sites do talk about privacy, but only on hard-to-find privacy policy pages. There, the sites give strong reassurances about their privacy controls and the safety of data members choose to disclose on the site. There, the sites display third-party privacy seals and other icons designed to assuage any fears members have.

It’s the Carnegie Mellon experimental result in the real world. Users care about privacy, but don’t really think about it day to day. The social networking sites don’t want to remind users about privacy, even if they talk about it positively, because any reminder will result in users remembering their privacy fears and becoming more cautious about sharing personal data. But the sites also need to reassure those “privacy fundamentalists” for whom privacy is always salient, so they have very strong pro-privacy rhetoric for those who take the time to search them out. The two different marketing messages are for two different audiences.

Social networking sites are improving their privacy controls as a result of public pressure. At the same time, there is a counterbalancing business pressure to decrease privacy; watch what’s going on right now on Facebook, for example. Naively, we should expect companies to make their privacy policies clear to allow customers to make an informed choice. But the marketing need to reduce privacy salience will frustrate market solutions to improve privacy; sites would much rather obfuscate the issue than compete on it as a feature.

This essay originally appeared in the Guardian.

Laptop Security while Crossing Borders

Last year, I wrote about the increasing propensity for governments, including the U.S. and Great Britain, to search the contents of people’s laptops at customs. What we know is still based on anecdote, as no country has clarified the rules about what their customs officers are and are not allowed to do, and what rights people have.

Companies and individuals have dealt with this problem in several ways, from keeping sensitive data off laptops traveling internationally, to storing the data—encrypted, of course—on websites and then downloading it at the destination. I have never liked either solution. I do a lot of work on the road, and need to carry all sorts of data with me all the time. It’s a lot of data, and downloading it can take a long time. Also, I like to work on long international flights.

There’s another solution, one that works with whole-disk encryption products like PGP Disk (I’m on PGP’s advisory board), TrueCrypt, and BitLocker: Encrypt the data to a key you don’t know.

It sounds crazy, but stay with me. Caveat: Don’t try this at home if you’re not very familiar with whatever encryption product you’re using. Failure results in a bricked computer. Don’t blame me.

Step One: Before you board your plane, add another key to your whole-disk encryption (it’ll probably mean adding another “user”)—and make it random. By “random,” I mean really random: Pound the keyboard for a while, like a monkey trying to write Shakespeare. Don’t make it memorable. Don’t even try to memorize it.

Technically, this key doesn’t directly encrypt your hard drive. Instead, it encrypts the key that is used to encrypt your hard drive—that’s how the software allows multiple users.

So now there are two different users with two different keys: the one you normally use, and some random one you just invented.

Step Two: Send that new random key to someone you trust. Make sure the trusted recipient has it, and make sure it works. You won’t be able to recover your hard drive without it.

Step Three: Burn, shred, delete or otherwise destroy all copies of that new random key. Forget it. If it was sufficiently random and non-memorable, this should be easy.

Step Four: Board your plane normally and use your computer for the whole flight.

Step Five: Before you land, delete the key you normally use.

At this point, you will not be able to boot your computer. The only key remaining is the one you forgot in Step Three. There’s no need to lie to the customs official; you can even show him a copy of this article if he doesn’t believe you.

Step Six: When you’re safely through customs, get that random key back from your confidant, boot your computer and re-add the key you normally use to access your hard drive.

And that’s it.
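The steps above can be sketched with a toy model of the multi-user mechanism: each user's passphrase unlocks its own wrapped copy of the one master key that actually encrypts the disk. Everything in this sketch is an assumption made for illustration. The class and method names are hypothetical, the XOR-based wrapping and hash check stand in for whatever a real product does, and none of it reflects the internals of PGP Disk, TrueCrypt, or BitLocker. Do not use it to protect real data.

```python
import hashlib
import os
import secrets

PBKDF2_ROUNDS = 50_000  # illustrative work factor


def _derive_kek(passphrase, salt):
    """Stretch a passphrase into a 32-byte key-encrypting key."""
    return hashlib.pbkdf2_hmac(
        "sha256", passphrase.encode("utf-8"), salt, PBKDF2_ROUNDS, dklen=32
    )


def _xor(a, b):
    return bytes(x ^ y for x, y in zip(a, b))


class ToyVolume:
    """Toy multi-user volume: one master key, one wrapped copy per user."""

    def __init__(self, user, passphrase):
        master_key = secrets.token_bytes(32)
        self._check = hashlib.sha256(master_key).digest()  # toy verifier
        self._slots = {}
        self._store_slot(user, passphrase, master_key)

    def _store_slot(self, user, passphrase, master_key):
        salt = os.urandom(16)
        self._slots[user] = (salt, _xor(master_key, _derive_kek(passphrase, salt)))

    def unlock(self, passphrase):
        """Return the master key if any slot opens, else None."""
        for salt, wrapped in self._slots.values():
            candidate = _xor(wrapped, _derive_kek(passphrase, salt))
            if secrets.compare_digest(hashlib.sha256(candidate).digest(), self._check):
                return candidate
        return None

    def add_user(self, existing_passphrase, new_user, new_passphrase):
        master_key = self.unlock(existing_passphrase)
        if master_key is None:
            raise PermissionError("existing passphrase does not unlock the volume")
        self._store_slot(new_user, new_passphrase, master_key)

    def remove_user(self, user):
        if len(self._slots) <= 1:
            raise ValueError("refusing to delete the last remaining key")
        del self._slots[user]


usual = "my usual passphrase"
volume = ToyVolume("me", usual)
original_key = volume.unlock(usual)

random_key = secrets.token_urlsafe(32)            # Step One: add a random key
volume.add_user(usual, "travel", random_key)
held_by_confidant = random_key                    # Step Two: send it to someone you trust
del random_key                                    # Step Three: destroy your own copy
volume.remove_user("me")                          # Step Five: delete your usual key
locked_at_border = volume.unlock(usual) is None
volume.add_user(held_by_confidant, "me", usual)   # Step Six: get the key back, re-add yours
recovered = volume.unlock(usual) == original_key
print(locked_at_border, recovered)
```

The point of the design is that adding or deleting a user never re-encrypts the disk; it only adds or removes one wrapped copy of the master key. That is why Step Five is quick, and why losing the random key before Step Six is fatal.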

This is by no means a magic get-through-customs-easily card. Your computer might be impounded, and you might be taken to court and compelled to reveal who has the random key.

But the purpose of this protocol isn’t to prevent all that; it’s just to deny any possible access to your computer to customs. You might be delayed. You might have your computer seized. (This will cost you any work you did on the flight, but—honestly—at that point that’s the least of your troubles.) You might be turned back or sent home. But when you’re back home, you have access to your corporate management, your personal attorneys, your wits after a good night’s sleep, and all the rights you normally have in whatever country you’re now in.

This procedure not only protects you against the warrantless search of your data at the border, it also allows you to deny a customs official your data without having to lie or pretend—which itself is often a crime.

Now the big question: Who should you send that random key to?

Certainly it should be someone you trust, but—more importantly—it should be someone with whom you have a privileged relationship. Depending on the laws in your country, this could be your spouse, your attorney, your business partner or your priest. In a larger company, the IT department could institutionalize this as a policy, with the help desk acting as the key holder.

You could also send it to yourself, but be careful. You don’t want to e-mail it to your webmail account, because then you’d be lying when you tell the customs official that there is no possible way you can decrypt the drive.

You could put the key on a USB drive and send it to your destination, but there are potential failure modes. It could fail to get there in time to be waiting for your arrival, or it might not get there at all. You could airmail the drive with the key on it to yourself a couple of times, in a couple of different ways, and also fax the key to yourself … but that’s more work than I want to do when I’m traveling.

If you only care about the return trip, you can set it up before you return. Or you can set up an elaborate one-time pad system, with identical lists of keys with you and at home: Destroy each key on the list you have with you as you use it.

Remember that you’ll need to have full-disk encryption, using a product such as PGP Disk, TrueCrypt or BitLocker, already installed and enabled to make this work.

I don’t think we’ll ever get to the point where our computer data is safe when crossing an international border. Even if countries like the U.S. and Britain clarify their rules and institute privacy protections, there will always be other countries that will exercise greater latitude with their authority. And sometimes protecting your data means protecting your data from yourself.

This essay originally appeared on Wired.com.

Data Leakage Through Power Lines

The NSA has known about this for decades:

Security researchers found that poor shielding on some keyboard cables means useful data can be leaked about each character typed.

By analysing the information leaking onto power circuits, the researchers could see what a target was typing.

The attack has been demonstrated to work at a distance of up to 15m, but refinement may mean it could work over much longer distances.

These days, there’s lots of open research on side channels.

Poor Man's Steganography

Hide files inside PDF documents: “embed a file in a PDF document and corrupt the reference, thereby effectively making the embedded file invisible to the PDF reader.”

Gaze Tracking Software Protecting Privacy

Interesting use of gaze tracking software to protect privacy:

Chameleon uses gaze-tracking software and camera equipment to track an authorized reader’s eyes to show only that one person the correct text. After a 15-second calibration period in which the software essentially “learns” the viewer’s gaze patterns, anyone looking over that user’s shoulder sees dummy text that randomly and constantly changes.

To tap the broader consumer market, Anderson built a more consumer-friendly version called PrivateEye, which can work with a simple Webcam. The software blurs a user’s monitor when he or she turns away. It also detects other faces in the background, and a small video screen pops up to alert the user that someone is looking at the screen.

How effective this is will mostly be a usability problem, but I like the idea of a system detecting if anyone else is looking at my screen.

Slashdot story.

EDITED TO ADD (7/14): A demo.

North Korean Cyberattacks

To hear the media tell it, the United States suffered a major cyberattack last week. Stories were everywhere. "Cyber Blitz hits U.S., Korea" was the headline in Thursday’s Wall Street Journal. North Korea was blamed.

Where were you when North Korea attacked America? Did you feel the fury of North Korea’s armies? Were you fearful for your country? Or did your resolve strengthen, knowing that we would defend our homeland bravely and valiantly?

My guess is that you didn’t even notice, that—if you didn’t open a newspaper or read a news website—you had no idea anything was happening. Sure, a few government websites were knocked out, but that’s not alarming or even uncommon. Other government websites were attacked but defended themselves, the sort of thing that happens all the time. If this is what an international cyberattack looks like, it hardly seems worth worrying about at all.

Politically motivated cyberattacks are nothing new. We’ve seen UK vs. Ireland. Israel vs. the Arab states. Russia vs. several former Soviet Republics. India vs. Pakistan, especially after the nuclear bomb tests in 1998. China vs. the United States, especially in 2001 when a U.S. spy plane collided with a Chinese fighter jet. And so on and so on.

The big one happened in 2007, when the government of Estonia was attacked in cyberspace following a diplomatic incident with Russia about the relocation of a Soviet World War II memorial. The networks of many Estonian organizations, including the Estonian parliament, banks, ministries, newspapers and broadcasters, were attacked and—in many cases—shut down. Estonia was quick to blame Russia, which was equally quick to deny any involvement.

It was hyped as the first cyberwar, but after two years there is still no evidence that the Russian government was involved. Though Russian hackers were indisputably the major instigators of the attack, the only individuals positively identified have been young ethnic Russians living inside Estonia, who were angry over the statue incident.

Poke at any of these international incidents, and what you find are kids playing politics. Last Wednesday, South Korea’s National Intelligence Service admitted that it didn’t actually know that North Korea was behind the attacks: "North Korea or North Korean sympathizers in the South" was what it said. Once again, it’ll be kids playing politics.

This isn’t to say that cyberattacks by governments aren’t an issue, or that cyberwar is something to be ignored. The constant attacks by Chinese nationals against U.S. networks may not be government-sponsored, but it’s pretty clear that they’re tacitly government-approved. Criminals, from lone hackers to organized crime syndicates, attack networks all the time. And war expands to fill every possible theater: land, sea, air, space, and now cyberspace. But cyberterrorism is nothing more than a media invention designed to scare people. And for there to be a cyberwar, there first needs to be a war.

Israel is currently considering attacking Iran in cyberspace, for example. If it tries, it’ll discover that attacking computer networks is an inconvenience to the nuclear facilities it’s targeting, but doesn’t begin to substitute for bombing them.

In May, President Obama gave a major speech on cybersecurity. He was right when he said that cybersecurity is a national security issue, and that the government needs to step up and do more to prevent cyberattacks. But he couldn’t resist hyping the threat with scare stories: "In one of the most serious cyber incidents to date against our military networks, several thousand computers were infected last year by malicious software—malware," he said. What he didn’t add was that those infections occurred because the Air Force couldn’t be bothered to keep its patches up to date.

This is the face of cyberwar: easily preventable attacks that, even when they succeed, only a few people notice. Even this current incident is turning out to be a sloppily modified five-year-old worm that no modern network should still be vulnerable to.

Securing our networks doesn’t require some secret advanced NSA technology. It’s the boring network security administration stuff we already know how to do: keep your patches up to date, install good anti-malware software, correctly configure your firewalls and intrusion-detection systems, monitor your networks. And while some government and corporate networks do a pretty good job at this, others fail again and again.

Enough of the hype and the bluster. The news isn’t the attacks, but that some networks had security lousy enough to be vulnerable to them.

This essay originally appeared on the Minnesota Public Radio website.

Strong Web Passwords

Interesting paper from HotSec ’07: “Do Strong Web Passwords Accomplish Anything?” by Dinei Florêncio, Cormac Herley, and Baris Coskun.

ABSTRACT: We find that traditional password advice given to users is somewhat dated. Strong passwords do nothing to protect online users from password stealing attacks such as phishing and keylogging, and yet they place considerable burden on users. Passwords that are too weak of course invite brute-force attacks. However, we find that relatively weak passwords, about 20 bits or so, are sufficient to make brute-force attacks on a single account unrealistic so long as a “three strikes” type rule is in place. Above that minimum it appears that increasing password strength does little to address any real threat. If a larger credential space is needed it appears better to increase the strength of the user ID’s rather than the passwords. For large institutions this is just as effective in deterring bulk guessing attacks and is a great deal better for users. For small institutions there appears little reason to require strong passwords for online accounts.
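The arithmetic behind the 20-bit claim can be sketched in a few lines. This is only a back-of-the-envelope illustration, not the paper's model: it assumes the password is chosen uniformly at random from a space of 2^20 values (real user passwords are not) and that the account locks for good after three wrong guesses. The function name is hypothetical.

```python
def guess_success_probability(bits: int, attempts: int) -> float:
    """Chance that `attempts` distinct guesses hit a password drawn
    uniformly at random from a space of 2**bits possibilities."""
    space = 2 ** bits
    return min(attempts, space) / space

# Assumed scenario: 20-bit password, lockout after three guesses.
p = guess_success_probability(20, 3)
print(f"{p:.2e}")  # 2.86e-06
```

Under those assumptions an attacker targeting one account succeeds roughly three times in a million, which is why the lockout rule matters more than extra password bits.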

Lost Suitcases in Airport Restrooms

Want to cause chaos at an airport? Leave a suitcase in the restroom:

Three incoming flights from London were cancelled and about 150 others were delayed for up to three hours, while the army’s bomb squad carried out its investigation, before giving the all-clear at about 5pm.

Passengers were told to leave the arrivals hall, main check-in area at the terminal building, the food courts and shops, and gather at safety areas outside.

The scare led to major traffic disruption around the airport, with tailbacks stretching back about a mile. Some passengers faced lengthy walks to the airport after being dropped off by shuttle bus from the city centre.

Oddest quote is from a police spokesperson:

“Inquires are under way to establish how the luggage came to be located within the toilets.”

My guess is that someone left it there.

I’d suggest this as a good denial-of-service attack, but certainly there is a video camera recording of the person bringing the suitcase into the airport. The article says it was left in the “domestic arrivals area.” I don’t know if that’s inside airport security or not.

Making an Operating System Virus Free

Commenting on Google’s claim that Chrome was designed to be virus-free, I said:

Bruce Schneier, the chief security technology officer at BT, scoffed at Google’s promise. “It’s an idiotic claim,” Schneier wrote in an e-mail. “It was mathematically proved decades ago that it is impossible—not an engineering impossibility, not technologically impossible, but the 2+2=3 kind of impossible—to create an operating system that is immune to viruses.”

What I was referring to, although I couldn’t think of his name at the time, was Fred Cohen’s 1986 Ph.D. thesis where he proved that it was impossible to create a virus-checking program that was perfect. That is, it is always possible to write a virus that any virus-checking program will not detect.

This reaction to my comment is accurate:

That seems to us like he’s picking on the semantics of Google’s statement just a bit. Google says that users “won’t have to deal with viruses,” and Schneier is noting that it’s simply not possible to create an OS that can’t be taken down by malware. While that may be the case, it’s likely that Chrome OS is going to be arguably more secure than the other consumer operating systems currently in use today. In fact, we didn’t take Google’s statement to mean that Chrome OS couldn’t get a virus EVER; we just figured they meant it was a lot harder to get one on their new OS – didn’t you?

When I said that, I had not seen Google’s statement. I was responding to what the reporter was telling me on the phone. So yes, I jumped on the reporter’s claim about Google’s claim. I did try to temper my comment:

Redesigning an operating system from scratch, “[taking] security into account all the way up and down,” could make for a more secure OS than ones that have been developed so far, Schneier said. But that’s different from Google’s promise that users won’t have to deal with viruses or malware, he added.

To summarize, there is a lot that can be done in an OS to reduce the threat of viruses and other malware. If the Chrome team started from scratch and took security seriously all through the design and development process, they have the potential to develop something really secure. But I don’t know if they did.

NSA Building Massive Data Center in Utah

They’re expanding:

The years-in-the-making project, which may cost billions over time, got a $181 million start last week when President Obama signed a war spending bill in which Congress agreed to pay for primary construction, power access and security infrastructure. The enormous building, which will have a footprint about three times the size of the Utah State Capitol building, will be constructed on a 200-acre site near the Utah National Guard facility’s runway.

Congressional records show that initial construction—which may begin this year—will include tens of millions in electrical work and utility construction, a $9.3 million vehicle inspection facility, and $6.8 million in perimeter security fencing. The budget also allots $6.5 million for the relocation of an existing access road, communications building and training area.

Officials familiar with the project say it may bring as many as 1,200 high-tech jobs….

It will also require at least 65 megawatts of power….

Another article.

The ATM Vulnerability You Won't Hear About

The talk has been pulled from the BlackHat conference:

Barnaby Jack, a researcher with Juniper Networks, was to present a demonstration showing how he could jackpot a popular ATM brand by exploiting a vulnerability in its software.

Jack was scheduled to present his talk at the upcoming Black Hat security conference being held in Las Vegas at the end of July.

But on Monday evening, his employer released a statement saying it was canceling the talk due to the vendor’s intervention.

More:

“The vulnerability Barnaby was to discuss has far reaching consequences, not only to the affected ATM vendor, but to other ATM vendors and—ultimately—the public,” wrote Brendan Lewis, director of corporate social media relations for Juniper in a statement posted to the company’s official blog last week. “To publicly disclose the research findings before the affected vendor could properly mitigate the exposure would have potentially placed their customers at risk. That is something we don’t want to see happen.”

Homomorphic Encryption Breakthrough

Last month, IBM made some pretty brash claims about homomorphic encryption and the future of security. I hate to be the one to throw cold water on the whole thing—as cool as the new discovery is—but it’s important to separate the theoretical from the practical.

Homomorphic cryptosystems are ones where mathematical operations on the ciphertext have regular effects on the plaintext. A normal symmetric cipher—DES, AES, or whatever—is not homomorphic. Assume you have a plaintext P, and you encrypt it with AES to get a corresponding ciphertext C. If you multiply that ciphertext by 2, and then decrypt 2C, you get random gibberish instead of P. If you got something else, like 2P, that would imply some pretty strong nonrandomness properties of AES and no one would trust its security.

The RSA algorithm is different. Encrypt P to get C, multiply C by the encryption of 2, and then decrypt the product: you get 2P. That’s a homomorphism: perform some mathematical operation to the ciphertext, and that operation is reflected in the plaintext. The RSA algorithm is homomorphic with respect to multiplication, something that has to be taken into account when evaluating the security of a security system that uses RSA.
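A toy sketch of RSA's multiplicative property: multiplying two ciphertexts gives the encryption of the product of the two plaintexts. It uses unpadded textbook RSA with tiny primes chosen for illustration (the primes, exponent, and helper names are assumptions, and nothing here is secure).

```python
# Toy textbook RSA (no padding, tiny primes); illustration only.
p, q = 61, 53
n = p * q                    # modulus, 3233
phi = (p - 1) * (q - 1)      # 3120
e = 17                       # public exponent
d = pow(e, -1, phi)          # private exponent via modular inverse (Python 3.8+)

def encrypt(m: int) -> int:
    return pow(m, e, n)

def decrypt(c: int) -> int:
    return pow(c, d, n)

P = 21
C = encrypt(P)

# Multiply the ciphertext of P by the ciphertext of 2, all modulo n.
product = (C * encrypt(2)) % n
print(decrypt(product))  # 42, i.e. 2 * P
```

Real deployments add randomized padding, which deliberately destroys this property.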

This isn’t anything new. RSA’s homomorphism was known in the 1970s, and other algorithms that are homomorphic with respect to addition have been known since the 1980s. But what has eluded cryptographers is a fully homomorphic cryptosystem: one that is homomorphic under both addition and multiplication and yet still secure. And that’s what IBM researcher Craig Gentry has discovered.

This is a bigger deal than might appear at first glance. Any computation can be expressed as a Boolean circuit: a series of additions and multiplications. Your computer consists of a zillion Boolean circuits, and you can run programs to do anything on your computer. This algorithm means you can perform arbitrary computations on homomorphically encrypted data. More concretely: if you encrypt data in a fully homomorphic cryptosystem, you can ship that encrypted data to an untrusted person and that person can perform arbitrary computations on that data without being able to decrypt the data itself. Imagine what that would mean for cloud computing, or any outsourcing infrastructure: you no longer have to trust the outsourcer with the data.
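To make the "additions and multiplications" point concrete: on single bits, addition modulo 2 is XOR and multiplication modulo 2 is AND, and those two operations are enough to build any circuit. The sketch below builds a one-bit full adder from only those two operations on ordinary unencrypted bits; the function names are hypothetical, and it says nothing about how Gentry's scheme evaluates gates on ciphertexts.

```python
def add(a: int, b: int) -> int:
    """Addition modulo 2, which is XOR on bits."""
    return (a + b) % 2

def mul(a: int, b: int) -> int:
    """Multiplication modulo 2, which is AND on bits."""
    return (a * b) % 2

def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """One-bit full adder using only add and mul."""
    sum_bit = add(add(a, b), carry_in)
    # The carry is set when at least two of the three inputs are 1.
    carry_out = add(mul(a, b), mul(carry_in, add(a, b)))
    return sum_bit, carry_out

print(full_adder(1, 1, 1))  # (1, 1), binary 11 = 3
```

A scheme that can apply both operations to encrypted bits can therefore, in principle, evaluate a circuit like this one without seeing the inputs.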

Unfortunately (you knew that was coming, right?), Gentry’s scheme is completely impractical. It uses something called an ideal lattice as the basis for the encryption scheme, and both the size of the ciphertext and the complexity of the encryption and decryption operations grow enormously with the number of operations you need to perform on the ciphertext, and that number needs to be fixed in advance. And converting a computer program, even a simple one, into a Boolean circuit requires an enormous number of operations. These aren’t impracticalities that can be solved with some clever optimization techniques and a few turns of Moore’s Law; this is an inherent limitation in the algorithm. In one article, Gentry estimates that performing a Google search with encrypted keywords (a perfectly reasonable simple application of this algorithm) would increase the amount of computing time by a factor of about a trillion. Moore’s Law calculates that it would be 40 years before that homomorphic search would be as efficient as a search today, and I think he’s being optimistic with even this most simple of examples.
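The 40-year figure can be reproduced with a quick calculation. A trillion-fold slowdown is about 40 doublings, so the estimate works out if computing power doubles once a year. That doubling period is an assumption made here to match the number; the text does not state it, and the common 18-month figure would give about 60 years. The function name is hypothetical.

```python
import math

def years_to_catch_up(slowdown: float, years_per_doubling: float) -> float:
    """Years of steady doubling needed to absorb a given slowdown factor."""
    return math.log2(slowdown) * years_per_doubling

slowdown = 1e12  # "about a trillion"
print(round(years_to_catch_up(slowdown, 1.0)))  # 40
print(round(years_to_catch_up(slowdown, 1.5)))  # 60
```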

Despite this, IBM’s PR machine has been in overdrive about the discovery. Its press release makes it sound like this new homomorphic scheme is going to rewrite the business of computing: not just cloud computing, but “enabling filters to identify spam, even in encrypted email, or protection information contained in electronic medical records.” Maybe someday, but not in my lifetime.

This is not to take anything away from Gentry or his discovery. Visions of a fully homomorphic cryptosystem have been dancing in cryptographers’ heads for thirty years. I never expected to see one. It will be years before enough cryptographers have examined the algorithm for us to have any confidence that the scheme is secure, but, practicality be damned, this is an amazing piece of work.

Spanish Police Foil Remote-Controlled Zeppelin Jailbreak

Sometimes movie plots actually happen:

…three people have been arrested after police discovered their plan to free a drug trafficker from an island prison using a 13-foot airship carrying night goggles, climbing gear and camouflage paint.

[…]

The arrested men had setup an elaborate surveillance operation of the prison that involved a camouflaged tent, powerful binoculars, telephoto lenses, and motion detection sensors. But authorities caught wind of the plan when they intercepted the inflatable zeppelin as it arrived from the Italian town of Bergamo.

EDITED TO ADD (7/14): Another story, with more detail.

Court Limits on TSA Searches

This is good news:

A federal judge in June threw out seizure of three fake passports from a traveler, saying that TSA screeners violated his Fourth Amendment rights against unreasonable search and seizure. Congress authorizes TSA to search travelers for weapons and explosives; beyond that, the agency is overstepping its bounds, U.S. District Court Judge Algenon L. Marbley said.

“The extent of the search went beyond the permissible purpose of detecting weapons and explosives and was instead motivated by a desire to uncover contraband evidencing ordinary criminal wrongdoing,” Judge Marbley wrote.

In the second case, Steven Bierfeldt, treasurer for the Campaign for Liberty, a political organization launched from Ron Paul’s presidential run, was detained at the St. Louis airport because he was carrying $4,700 in a lock box from the sale of tickets, T-shirts, bumper stickers and campaign paraphernalia. TSA screeners quizzed him about the cash, his employment and the purpose of his trip to St. Louis, then summoned local police and threatened him with arrest because he responded to their questions with a question of his own: What were his rights and could TSA legally require him to answer?

[…]

Mr. Bierfeldt’s suit, filed in U.S. District Court in the District of Columbia, seeks to bar TSA from “conducting suspicion-less pre-flight searches of passengers or their belongings for items other than weapons or explosives.”

I wrote about this a couple of weeks ago:

…Obama should mandate that airport security be solely about terrorism, and not a general-purpose security checkpoint to catch everyone from pot smokers to deadbeat dads.

The Constitution provides us, both Americans and visitors to America, with strong protections against invasive police searches. Two exceptions come into play at airport security checkpoints. The first is “implied consent,” which means that you cannot refuse to be searched; your consent is implied when you purchased your ticket. And the second is “plain view,” which means that if the TSA officer happens to see something unrelated to airport security while screening you, he is allowed to act on that.

Both of these principles are well established and make sense, but it’s their combination that turns airport security checkpoints into police-state-like checkpoints.

The TSA should limit its searches to bombs and weapons and leave general policing to the police—where we know courts and the Constitution still apply.

Why People Don't Understand Risks

Yesterday’s Minneapolis Star Tribune had the front-page headline: “Co-sleeping kills about 20 infants each year.” (The headline in the web article is different.) The only problem is, in either case, there’s no additional information with which to make sense of the statistic.

How many infants don’t die each year? How many infants die each year in separate beds? Is the death rate for co-sleepers greater or less than the death rate for separate-bed sleepers? Without this information, it’s impossible to know whether this statistic is good or bad.

But the media rarely provides context for the data. The story is in the aftermath of an incident where a baby was accidentally smothered in his sleep.

Oh, and that 20-infants-per-year number is for Minnesota only. No word as to whether the situation is better or worse in other states.

More Low-Tech Security Solutions

Anti-theft lunch bags, for those who have a problem with their lunches being stolen.

Only works until the thief figures it out, though.

Pocketless Trousers to Protect Against Bribery

I wonder if it will work.

Nepal’s anti-corruption authority has come up with a novel solution to rampant bribe-taking at the country’s only international airport—the pocketless trouser.

The authority said it was issuing the new, bribe-proof garment to all airport officials after uncovering widespread corruption at Kathmandu’s Tribhuvan International Airport.

Terrorist Risk of Cloud Computing

I don’t even know where to begin on this one:

As we have seen in the past with other technologies, while cloud resources will likely start out decentralized, as time goes by and economies of scale take hold, they will start to collect into mega-technology hubs. These hubs could, as the end of this cycle, number in the low single digits and carry most of the commerce and data for a nation like ours. Elsewhere, particularly in Europe, those hubs could handle several nations’ public and private data.

And therein lays the risk.

The Twin Towers, which were destroyed in the 9/11 attack, took down a major portion of the U.S. infrastructure at the same time. The capability and coverage of cloud-based mega-hubs would easily dwarf hundreds of Twin Tower-like operations. Although some redundancy would likely exist—hopefully located in places safe from disasters—should a hub be destroyed, it could likely take down a significant portion of the country it supported at the same time.

[…]

Each hub may represent a target more attractive to terrorists than today’s favored nuclear power plants.

It’s only been eight years, and this author thinks that the 9/11 attacks “took down a major portion of the U.S. infrastructure.” That’s just plain ridiculous. I was there (in the U.S., not in New York). The government, the banks, the power system, commerce everywhere except lower Manhattan, the Internet, the water supply, the food supply, and every other part of the U.S. infrastructure I can think of worked just fine during and after the attacks. The New York Stock Exchange was up and running in a few days. Even the piece of our infrastructure that was the most disrupted, the airplane network, was up and running in a week. I think the author of that piece needs to travel to somewhere on the planet where major portions of the infrastructure actually get disrupted, so he can see what it’s like.

No less ridiculous is the main point of the article, which seems to imply that terrorists will someday decide that disrupting people’s Lands’ End purchases will be more attractive than killing them. Okay, that was a caricature of the article, but not by much. Terrorism is an attack against our minds, using random death and destruction as a tactic to cause terror in everyone. To even suggest that data disruption would cause more terror than nuclear fallout completely misunderstands terrorism and terrorists.

And anyway, any e-commerce, banking, etc. site worth anything is backed up and dual-homed. There are lots of risks to our data networks, but physically blowing up a data center isn’t high on the list.

The Pros and Cons of Password Masking

Usability guru Jakob Nielsen opened up a can of worms when he made the case for unmasking passwords in his blog. I chimed in that I agreed. Almost 165 comments on my blog (and several articles, essays, and many other blog posts) later, the consensus is that we were wrong.

I was certainly too glib. Like any security countermeasure, password masking has value. But like any countermeasure, password masking is not a panacea. And the costs of password masking need to be balanced with the benefits.

The cost is accuracy. When users don’t get visual feedback from what they’re typing, they’re more prone to make mistakes. This is especially true with character strings that have non-standard characters and capitalization. This has several ancillary costs:

- Users get pissed off.

- Users are more likely to choose easy-to-type passwords, reducing both mistakes and security. Removing password masking will make people more comfortable with complicated passwords: they’ll become easier to memorize and easier to use.

The benefits of password masking are more obvious:

- Security from shoulder surfing. If people can’t look over your shoulder and see what you’re typing, they’re much less likely to be able to steal your password. Yes, they can look at your fingers instead, but that’s much harder than looking at the screen. Surveillance cameras are also an issue: it’s easier to watch someone’s fingers on recorded video, but reading a cleartext password off a screen is trivial.

In some situations, there is a trust dynamic involved. Do you type your password while your boss is standing over your shoulder watching? How about your spouse or partner? Your parent or child? Your teacher or students? At ATMs, there’s a social convention of standing away from someone using the machine, but that convention doesn’t apply to computers. You might not trust the person standing next to you enough to let him see your password, but don’t feel comfortable telling him to look away. Password masking solves that social awkwardness.

- Security from screen scraping malware. This is less of an issue; keyboard loggers are more common and unaffected by password masking. And if you have that kind of malware on your computer, you’ve got all sorts of problems.

- A security “signal.” Password masking alerts users, and I’m thinking users who aren’t particularly security savvy, that passwords are a secret.

I believe that shoulder surfing isn’t nearly the problem it’s made out to be. One, lots of people use their computers in private, with no one looking over their shoulders. Two, personal handheld devices are used very close to the body, making shoulder surfing all that much harder. Three, it’s hard to quickly and accurately memorize a random non-alphanumeric string that flashes on the screen for a second or so.

This is not to say that shoulder surfing isn’t a threat. It is. And, as many readers pointed out, password masking is one of the reasons it isn’t more of a threat. And the threat is greater for those who are not fluent computer users: slow typists and people who are likely to choose bad passwords. But I believe that the risks are overstated.

Password masking is definitely important on public terminals with short PINs. (I’m thinking of ATMs.) The value of the PIN is large, shoulder surfing is more common, and a four-digit PIN is easy to remember in any case.

And lastly, this problem largely disappears on the Internet on your personal computer. Most browsers include the ability to save and then automatically populate password fields, making the usability problem go away at the expense of another security problem (the security of the password becomes the security of the computer). There’s a Firefox plugin that gets rid of password masking. And programs like my own Password Safe allow passwords to be cut and pasted into applications, also eliminating the usability problem.

One approach is to make it a configurable option. High-risk banking applications could turn password masking on by default; other applications could turn it off by default. Browsers in public locations could turn it on by default. I like this, but it complicates the user interface.

A reader mentioned BlackBerry’s solution, which is to display each character briefly before masking it; that seems like an excellent compromise.

I, for one, would like the option. I cannot type complicated WEP keys into Windows—twice! what’s the deal with that?—without making mistakes. I cannot type my rarely used and very complicated PGP keys without making a mistake unless I turn off password masking. That’s what I was reacting to when I said “I agree.”

So was I wrong? Maybe. Okay, probably. Password masking definitely improves security; many readers pointed out that they regularly use their computer in crowded environments, and rely on password masking to protect their passwords. On the other hand, password masking reduces accuracy and makes it less likely that users will choose secure and hard-to-remember passwords. I will concede that the password masking trade-off is more beneficial than I thought in my snap reaction, but also that the answer is not nearly as obvious as we have historically assumed.

The Insecurity of Secrecy

Good essay, “The Staggering Cost of Playing it ‘Safe’,” about the political motivations for terrorist security policy.

Senator Barbara Boxer has led an effort to at least put together a public database of ash storage sites so that people can judge the risk to the areas where they live. However, even this effort has been blocked not by coal companies or utilities, but by the DHS. How could it possibly be a national security interest to cover up the location of material that’s “not toxic or anything?” It’s not. In fact, even if the ash turns out to be as bad as its worst critics fear, blocking the database is far more dangerous than revealing the location of these sites. Not only has there not been any threat against these sites by terrorists, and no workable scenario by which they might cause a problem, coal slurry impoundments are already failing with regularity, dousing parts of America with millions of gallons of this material. It doesn’t take terrorists to make this happen.

Blocking the release of this information doesn’t protect the citizens of the United States in any way. It’s just another example of the same creeping secrecy that makes cities more difficult to manage because of secrecy over facilities. The same creeping secrecy that “blurs” national monuments from images and puts intentional gaps in public information. The same creeping secrecy that increasingly elevates the most unlikely attack—the shoe bombers of the world—above our right to know what’s going on around us so that we can make informed decisions. The same secrecy that defends torturers.

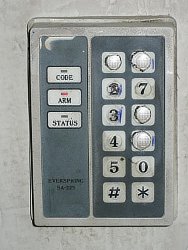

Information Leakage from Keypads

Can anyone guess the entry codes for these door locks?

There are 10,000 possible four-digit codes, but you only have to try 24 on these keypads. The first is most likely 1986 or 1968. The second is almost certainly 1234.
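The count of 24 is just the number of orderings of four distinct digits (4! = 24). A small sketch, assuming each worn key is used exactly once and that the first keypad's worn keys are 1, 6, 8 and 9 (inferred from the guesses above, not read off the photo):

```python
from itertools import permutations

def candidate_codes(worn_digits: str) -> list[str]:
    """All orderings of the worn digits, each digit used exactly once."""
    return ["".join(p) for p in permutations(worn_digits)]

codes = candidate_codes("1689")  # assumed worn keys on the first keypad
print(len(codes))                # 24
print("1986" in codes, "1968" in codes)  # True True
```

An attacker would then order the list by plausibility, trying years and simple sequences first.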

More Security Countermeasures from the Natural World

The plant Caladium steudneriifolium pretends to be ill so mining moths won’t eat it.

She believes that the plant essentially fakes being ill, producing variegated leaves that mimic those that have already been damaged by mining moth larvae. That deters the moths from laying any further larvae on the leaves, as the insects assume the previous caterpillars have already eaten most of the leaves’ nutrients.

Cabbage aphids arm themselves with chemical bombs:

Its body carries two reactive chemicals that only mix when a predator attacks it. The injured aphid dies. But in the process, the chemicals in its body react and trigger an explosion that delivers lethal amounts of poison to the predator, saving the rest of the colony.

The dark-footed ant spider mimics an ant so that it’s not eaten by other spiders, and so it can eat spiders itself:

M.melanotarsa is a jumping spider that protects itself from predators (like other jumping spiders) by resembling an ant. Earlier this month, Ximena Nelson and Robert Jackson showed that they bolster this illusion by living in silken apartment complexes and travelling in groups, mimicking not just the bodies of ants but their social lives too.

Now Nelson and Robert are back with another side to the ant-spider’s tale – it also uses its impersonation for attack as well as defence. It also feasts on the eggs and youngsters of the very same spiders that its ant-like form protects it from. It is, essentially, a spider that looks like an ant to avoid being eaten by spiders so that it itself can eat spiders.

My previous post about security stories from the insect world.

MD6 Withdrawn from SHA-3 Competition

In other SHA-3 news, Ron Rivest seems to have withdrawn MD6 from the SHA-3 competition. From an e-mail to a NIST mailing list:

We suggest that MD6 is not yet ready for the next SHA-3 round, and we also provide some suggestions for NIST as the contest moves forward.

Basically, the issue is that in order for MD6 to be fast enough to be competitive, the designers have to reduce the number of rounds down to 30-40, and at those rounds, the algorithm loses its proofs of resistance to differential attacks.

Thus, while MD6 appears to be a robust and secure cryptographic hash algorithm, and has much merit for multi-core processors, our inability to provide a proof of security for a reduced-round (and possibly tweaked) version of MD6 against differential attacks suggests that MD6 is not ready for consideration for the next SHA-3 round.

EDITED TO ADD (7/1): This is a very classy withdrawal, as we expect from Ron Rivest—especially given the fact that there are no attacks on it, while other algorithms have been seriously broken and their submitters keep trying to pretend that no one has noticed.

EDITED TO ADD (7/6): From the MD6 website:

We are not withdrawing our submission; NIST is free to select MD6 for further consideration in the next round if it wishes. But at this point MD6 doesn’t meet our own standards for what we believe should be required of a SHA-3 candidate, and we suggest that NIST might do better looking elsewhere. In particular, we feel that a minimum “ticket of admission” for SHA-3 consideration should be a proof of resistance to basic differential attacks, and we don’t know how to make such a proof for a reduced-round MD6.

New Attack on AES

There’s a new cryptanalytic attack on AES that is better than brute force:

Abstract. In this paper we present two related-key attacks on the full AES. For AES-256 we show the first key recovery attack that works for all the keys and has complexity 2^119, while the recent attack by Biryukov-Khovratovich-Nikolic works for a weak key class and has higher complexity. The second attack is the first cryptanalysis of the full AES-192. Both our attacks are boomerang attacks, which are based on the recent idea of finding local collisions in block ciphers and enhanced with the boomerang switching techniques to gain free rounds in the middle.

In an e-mail, the authors wrote:

We also expect that a careful analysis may reduce the complexities. As a preliminary result, we think that the complexity of the attack on AES-256 can be lowered from 2^119 to about 2^110.5 data and time.

We believe that these results may shed a new light on the design of the key-schedules of block ciphers, but they pose no immediate threat for the real world applications that use AES.

Agreed. While this attack is better than brute force—and some cryptographers will describe the algorithm as “broken” because of it—it is still far, far beyond our capabilities of computation. The attack is, and probably forever will be, theoretical. But remember: attacks always get better, they never get worse. Others will continue to improve on these numbers. While there’s no reason to panic, no reason to stop using AES, no reason to insist that NIST choose another encryption standard, this will certainly be a problem for some of the AES-based SHA-3 candidate hash functions.
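To put “far beyond our capabilities” into rough numbers, here is a back-of-the-envelope sketch. The rate of 10^18 operations per second is a hypothetical and deliberately generous assumption, not a figure from the paper. The calculation also ignores the related-key setting and the data the attack requires.

```python
# Assumption: a hypothetical attacker performing 10**18 operations per second.
OPS_PER_SECOND = 10**18
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def years_needed(log2_work):
    """Years to perform 2**log2_work operations at the assumed rate."""
    return 2.0**log2_work / (OPS_PER_SECOND * SECONDS_PER_YEAR)


for label, bits in [
    ("exhaustive 256-bit key search", 256),
    ("attack complexity in the abstract", 119),
    ("authors' preliminary estimate", 110.5),
]:
    print(f"{label}: 2^{bits} work, about {years_needed(bits):.1e} years")
```

Even at that assumed rate, 2^119 operations take on the order of 10^10 years, which is why the attack remains theoretical despite being far cheaper than exhaustive search.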

EDITED TO ADD (7/14): An FAQ.

Security, Group Size, and the Human Brain

If your company grows past 150 people, it’s time to get name badges. It’s not that larger groups are somehow less secure; it’s just that 150 is the cognitive limit on the number of people with whom a human brain can maintain a coherent social relationship.

Primatologist Robin Dunbar derived this number by comparing the volume of the neocortex (the “thinking” part of the mammalian brain) with the size of primate social groups. By analyzing data from 38 primate genera and extrapolating to the human neocortex size, he predicted a human “mean group size” of roughly 150.

This number appears regularly in human society; it’s the estimated size of a Neolithic farming village, the size at which Hittite settlements split, and the basic unit in professional armies from Roman times to the present day. Larger group sizes aren’t as stable because their members don’t know each other well enough. Instead of thinking of the members as people, we think of them as groups of people. For such groups to function well, they need externally imposed structure, such as name badges.

Of course, badges aren’t the only way to determine in-group/out-group status. Other markers include insignia, uniforms, and secret handshakes. They have different security properties and some make more sense than others at different levels of technology, but once a group reaches 150 people, it has to do something.

More generally, there are several layers of natural human group size that increase with a ratio of approximately three: 5, 15, 50, 150, 500, and 1500—although, really, the numbers aren’t as precise as all that, and groups that are less focused on survival tend to be smaller. The layers relate to both the intensity and intimacy of relationship and the frequency of contact.
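The “ratio of approximately three” can be checked directly from the listed layer sizes. This is a small sketch using only the numbers in the paragraph above. The geometric mean is one reasonable way to summarize the steps, not something the essay itself computes.

```python
layers = [5, 15, 50, 150, 500, 1500]

# Ratio between each layer and the one below it.
ratios = [big / small for small, big in zip(layers, layers[1:])]
print([round(r, 2) for r in ratios])

# Geometric mean of the step ratios: the constant factor that would
# take 5 to 1500 in five equal multiplicative steps.
mean_ratio = (layers[-1] / layers[0]) ** (1 / len(ratios))
print(round(mean_ratio, 2))
```

The individual steps alternate between 3 and about 3.3, so “approximately three” describes the rounded sequence well.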

The smallest, three to five, is a “clique”: the number of people from whom you would seek help in times of severe emotional distress. The 12-to-20 group is the “sympathy group”: people with whom you have special ties. After that, 30 to 50 is the typical size of hunter-gatherer overnight camps, generally drawn from the same pool of 150 people. No matter what size company you work for, there are only about 150 people you consider to be “co-workers.” (In small companies, Alice and Bob handle accounting. In larger companies, it’s the accounting department, and maybe you know someone there personally.) The 500-person group is the “megaband,” and the 1,500-person group is the “tribe.” Fifteen hundred is roughly the number of faces we can put names to, and the typical size of a hunter-gatherer society.

These numbers are reflected in military organization throughout history: squads of 10 to 15 organized into platoons of three to four squads, organized into companies of three to four platoons, organized into battalions of three to four companies, organized into regiments of three to four battalions, organized into divisions of two to three regiments, and organized into corps of two to three divisions.
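Multiplying those ranges out shows how the military units line up with the layers. The sketch below uses only the multipliers given in the paragraph above. The resulting head counts are simple products, not official unit strengths.

```python
# (unit, min sub-units, max sub-units), as listed in the essay.
hierarchy = [
    ("platoon", 3, 4),
    ("company", 3, 4),
    ("battalion", 3, 4),
    ("regiment", 3, 4),
    ("division", 2, 3),
    ("corps", 2, 3),
]

low, high = 10, 15  # squad size range from the essay
sizes = {"squad": (low, high)}
for unit, min_sub, max_sub in hierarchy:
    # Smallest unit: fewest, smallest sub-units; largest: most, biggest.
    low, high = low * min_sub, high * max_sub
    sizes[unit] = (low, high)

for unit, (smallest, largest) in sizes.items():
    print(f"{unit}: {smallest} to {largest}")
```

Under these multipliers a company comes out at 90 to 240 people, which brackets the 150 figure. A battalion (270 to 960) brackets 500, and a regiment (810 to 3,840) brackets 1,500.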

Coherence can become a real problem once organizations get above about 150 in size. So as group sizes grow across these boundaries, they have more externally imposed infrastructure and more formalized security systems. In intimate groups, pretty much all security is ad hoc. Companies smaller than 150 don’t bother with name badges; companies greater than 500 hire a guard to sit in the lobby and check badges. The military have had centuries of experience with this under rather trying circumstances, but even there the real commitment and bonding invariably occur at the company level. Above that you need to have rank imposed by discipline.

The whole brain-size comparison might be bunk, and a lot of evolutionary psychologists disagree with it. But certainly security systems become more formalized as groups grow larger and their members less known to each other. When do more formal dispute resolution systems arise: town elders, magistrates, judges? At what size boundary are formal authentication schemes required? Small companies can get by without the internal forms, memos, and procedures that large companies require; when does what tend to appear? How does punishment formalize as group size increases? And how do all these things affect group coherence? People act differently on social networking sites like Facebook when their list of “friends” grows larger and less intimate. Local merchants sometimes let known regulars run up tabs. I lend books to friends with much less formality than a public library. What examples have you seen?

An edited version of this essay, without links, appeared in the July/August 2009 issue of IEEE Security & Privacy.
